The breadth of online collaborative tools emerging today is quite simply staggering! A number of websites have appeared, either offering a specific product or hosting a multitude of collaborative tools (wikis, blogs, forums, repositories, communication tools, etc.) from which to work. What is so promising is the versatility and sophistication of products such as Thinkfold and Mindmeister, with niche products serving every kind of need imaginable. Crucially, many of these tools now offer real-time co-editing of documents (changes appear immediately for all users), multi-user support, a rich timeline of changes and additional communication tools.

In the near future, we will likely see some consolidation and more compatibility amongst these products, as well as platforms which (on the fly) integrate tools into a single collaborative online environment from which to work.

In the meantime, Robin Good, a social media guru, has put together a continually updated mindmap of the online collaborative tools currently available at: http://www.mindmeister.com/maps/show_public/12213323. The map offers an impressive list, broken down by category, of sites offering collaborative tools. They really are worth checking out!

Copyright © 2006-2008 Shane McLoughlin. This article may not be resold or redistributed without prior written permission.

Tuesday, May 26, 2009

Monday, May 25, 2009

Searching on the web: the new breed of search engines

There has been a lot of talk recently (on the web and elsewhere) about the next generation of "smarter" search engines. Below are examples of search engines which have recently gained coverage for their ability to (1) structure and present data pulled from the web, (2) filter information semantically by quality, (3) search structured data on the web, (4) search the 'real-time' web or (5) search the 'deep web':

(1) Structure and present data pulled from the web

Wolfram Alpha

'We aim to collect and curate all objective data; implement every known model, method, and algorithm; and make it possible to compute whatever can be computed about anything. Our goal is to build on the achievements of science and other systematizations of knowledge to provide a single source that can be relied on by everyone for definitive answers to factual queries.' (Wolfram, 2009) http://www.wolframalpha.com/

Google Squared

'Google Squared doesn't find webpages about your topic — instead, it automatically fetches and organizes facts from across the Internet.' (Google, 2009) It extracts data from relevant webpages and presents them in squared frames on a results page.

http://squared.google.com/

Bing

Bing is built to 'go beyond today's search experience' by recognising content and adapting to your query types, providing results which are "decision driven". According to the company: "we set out to create a new type of search experience with improvements in three key areas: (1) Delivering great search results and one-click access to relevant information, (2) Creating a more organized search experience, (3) Simplifying tasks and providing tools that enable insight about key decisions." (Microsoft, 2009)

http://www.bing.com/

http://www.discoverbing.com/

Sensebot

'SenseBot delivers a summary in response to your search query instead of a collection of links to Web pages. SenseBot parses results from the Web and prepares a text summary of them. The summary serves as a digest on the topic of your query, blending together the most significant and relevant aspects of the search results. The summary itself becomes the main result of your search...Sensebot attempts to understand what the result pages are about. It uses text mining to parse Web pages and identify their key semantic concepts. It then performs multidocument summarization of content to produce a coherent summary' (Sensebot, 2008)

http://www.sensebot.net/

(2) Provide more semantic filtering of information by quality

Hakia

'Hakia’s semantic technology provides a new search experience that is focused on quality, not popularity. hakia’s quality search results satisfy three criteria simultaneously: They (1) come from credible Web sites recommended by librarians, (2) represent the most recent information available, and (3) remain absolutely relevant to the query' (Hakia, 2009)

http://www.hakia.com/

(3) Search structured data on the web

SWSE

' There is already a lot of data out there which conforms to the proposed SW standards (e.g. RDF and OWL). Small vertical vocabularies and ontologies have emerged, and the community of people using these is growing daily. People publish descriptions about themselves using FOAF (Friend of a Friend), news providers publish newsfeeds in RSS (RDF Site Summary), and pictures are being annotated using various RDF vocabularies. [SWSE is] service which continuously explores and indexes the Semantic Web and provides an easy-to-use interface through which users can find the data they are looking for. We are therefore developing a Semantic Web Search Engine' (SWSE, 2009)

http://swse.deri.org/

Swoogle

'Swoogle is a search engine for the Semantic Web on the Web. Swoogle crawl the World Wide Web for a special class of web documents called Semantic Web documents, which are written in RDF' (Swoogle, 2007)

http://swoogle.umbc.edu/

A similar offering is Watson:

http://watson.kmi.open.ac.uk/WatsonWUI/
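To give a feel for what "structured data" means here, below is a minimal sketch in Python of data held as subject/predicate/object triples, in the spirit of RDF and FOAF. It is only an illustration: the people, the `ex:` identifiers and the `match` and `names_of_friends` helpers are made up for this post, and this is not how SWSE, Swoogle or Watson are actually implemented. The only real-world detail is the FOAF namespace and its `name` and `knows` properties.

```python
# FOAF namespace (real); everything else below is hypothetical sample data.
FOAF = "http://xmlns.com/foaf/0.1/"

# Each fact is a (subject, predicate, object) triple, as in RDF.
triples = [
    ("ex:alice", FOAF + "name", "Alice"),
    ("ex:alice", FOAF + "knows", "ex:bob"),
    ("ex:bob", FOAF + "name", "Bob"),
]


def match(triples, subject=None, predicate=None, obj=None):
    """Return the triples that fit the pattern; None acts as a wildcard."""
    return [
        (s, p, o)
        for (s, p, o) in triples
        if (subject is None or s == subject)
        and (predicate is None or p == predicate)
        and (obj is None or o == obj)
    ]


def names_of_friends(triples, person):
    """Follow 'knows' links from a person, then look up each friend's name."""
    friends = [o for (_, _, o) in match(triples, subject=person, predicate=FOAF + "knows")]
    return [
        name
        for friend in friends
        for (_, _, name) in match(triples, subject=friend, predicate=FOAF + "name")
    ]


if __name__ == "__main__":
    print(names_of_friends(triples, "ex:alice"))
```

Because every fact has the same three-part shape, a search engine can answer a question like "who does Alice know?" by matching patterns rather than by guessing from keywords on a page.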

(4) Search the 'real-time' web

OneRiot

'OneRiot crawls the links people share on Twitter, Digg and other social sharing services, then indexes the content on those pages in seconds. The end result is a search experience that allows users to find the freshest, most socially-relevant content from across the realtime web....we index our search results according to their current relevance and popularity' (Oneriot, 2009)

http://www.oneriot.com/

Scoopler

'Scoopler is a real-time search engine. We aggregate and organize content being shared on the internet as it happens, like eye-witness reports of breaking news, photos and videos from big events, and links to the hottest memes of the day. We do this by constantly indexing live updates from services including Twitter, Flickr, Digg, Delicious and more.' (Scoopler, 2009)

http://www.scoopler.com/

Collecta

'Collecta monitors the update streams of news sites, popular blogs and social media, and Flickr, so we can show you results as they happen' (Collecta, 2009).

http://www.collecta.com/

(5) Search the 'deep web'

DeepDyve

'The DeepDyve research engine uses proprietary search and indexing technology to cull rich, relevant content from thousands of journals, millions of documents, and billions of untapped Deep Web pages.' 'Researchers, students, technical professionals, business users, and other information consumers can access a wealth of untapped information that resides on the "Deep Web" – the vast majority of the Internet that is not indexed by traditional, consumer-based search engines. The DeepDyve research engine unlocks this in-depth, professional content and returns results that are not cluttered by opinion sites and irrelevant content.... The KeyPhrase™ algorithm, applies indexing techniques from the field of genomics. The algorithm matches patterns and symbols on a scale that traditional search engines cannot match, and it is perfectly suited for complex data found on the Deep Web' (Deepdyve, 2009)

http://www.deepdyve.com/

Copyright © 2006-2008 Shane McLoughlin. This article may not be resold or redistributed without prior written permission.

Monday, May 18, 2009

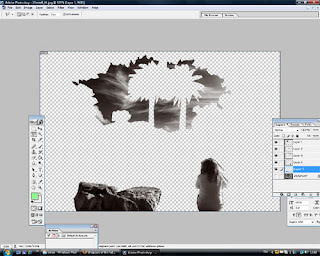

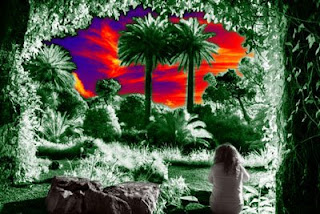

How to personalise images for luminous desktop wallpaper

Here is my 'how to' for personalising the winning shot of the 'International Garden photo' competition over at Igpoty. The results should make some luminous desktop wallpaper for your computer.

1. Open the following image (available at Igpoty) in Photoshop:

2. Use the lasso tool to mark out and highlight the sky (3 pieces). You'll need to copy each piece separately, then paste it to a new layer.

3. When all the pieces are copied, merge their layers until you have 1 piece.

4. Next use the lasso tool to mark out and copy the girl. Paste to a new layer. Do the same for the rock.

5. You should now have 3 additional layers on top of the original. If you hide the original layer, you should see the following:

6. You can now very lightly feather these new layers (1px or 2px max) so that they will eventually blend better with the original layer.

7. Use the 'Gradient Map' in 'Adjustments' to create a gradient of colours for both the original layer and the 'sky' layer. Below are examples of the finished product:

8. Finally, if you like, you can add additional effects such as rendering a 'Lens flare':

9. You may want to enlarge the original image to the resolution of your desktop before saving.

10. Choose 'Save for Web' and save as a JPEG image.

11. And that's it, you should have a terrific personalised desktop wallpaper or screensaver.

Here is a link to download 1200x1800 resolution images of the examples above:

http://rapidshare.com/files/234404204/screensavers.zip.html
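For the curious, here is a rough idea of what a gradient map (step 7) does under the hood, sketched in plain Python. Each pixel's brightness picks a point along a two-colour gradient, so dark areas take the first colour and bright areas take the second. This is a simplified sketch and not Photoshop's actual code: the brightness weights (the common Rec. 601 ones) are an assumption, the function names are my own, and the tiny 'image' is just a list of RGB tuples.

```python
def luminance(rgb):
    """Approximate brightness of an (r, g, b) pixel as a value from 0.0 to 1.0.

    The Rec. 601 weights are an assumption; Photoshop may weigh channels differently.
    """
    r, g, b = rgb
    value = (0.299 * r + 0.587 * g + 0.114 * b) / 255.0
    return max(0.0, min(1.0, value))


def blend(start, end, t):
    """Pick the colour a fraction t of the way from start to end."""
    return tuple(round(a + (b - a) * t) for a, b in zip(start, end))


def gradient_map(pixels, start, end):
    """Recolour every pixel: dark pixels move towards start, bright towards end."""
    return [[blend(start, end, luminance(p)) for p in row] for row in pixels]


if __name__ == "__main__":
    # A made-up 1x3 image: black, mid grey, white.
    image = [[(0, 0, 0), (128, 128, 128), (255, 255, 255)]]
    deep_purple = (20, 0, 80)
    warm_orange = (255, 200, 40)
    print(gradient_map(image, deep_purple, warm_orange))
```

Applying one gradient to the original layer and a different one to the 'sky' layer is what gives the finished wallpaper its two-tone glow.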

Copyright © 2009 Shane McLoughlin. This article may not be resold or redistributed without prior written permission.

Labels: background, desktop, howto, personalise, photoshop, wallpaper
